Director of AI at Tesla. Previously a research scientist at OpenAI and CS PhD student at Stanford. I like to train deep neural nets on large datasets 🧠🤖💥

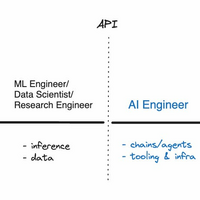

I think this is mostly right.

- LLMs created a whole new layer of abstraction and profession.

- I've so far called this role "Prompt Engineer" but agree it is misleading. It's not prompting alone; there's a lot of glue code/infra around it. Maybe "AI Engineer" is ~usable, though it takes something a bit too specific and makes it a bit too broad.

- ML people train algorithms/networks, usually from scratch, usually at lower capability.

- LLM training is becoming sufficiently different from ML because of its systems-heavy workloads, and is also splitting off into a new kind of role, focused on very large scale training of transformers on supercomputers.

- In numbers, there are probably going to be significantly more AI Engineers than there are ML engineers / LLM engineers.

- One can be quite successful in this role without ever training anything.

- I don't fully follow the Software 1.0/2.0 framing. Software 3.0 (imo ~prompting LLMs) is amusing because prompts are human-designed "code", but in English, and interpreted by an LLM (itself now a Software 2.0 artifact). AI Engineers simultaneously program in all 3 paradigms. It's a bit 😵‍💫

Excellent and unintuitive read on GPUs. The chip doing the compute has a tiny amount of memory and is connected to main memory literally through a straw. Most of the energy goes to data movement, too. Many repercussions, e.g. latency is better predicted by # activations than by # FLOPs.
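One common way to reason about the "straw" is a roofline-style estimate: an op can't finish faster than its math allows or faster than its bytes can cross the memory bus, whichever is slower. The sketch below is illustrative only and assumes hypothetical round-number hardware (100 TFLOP/s compute, 2 TB/s memory bandwidth), fp16 tensors, and idealized data reuse; it ignores caches, kernel launch overhead, and op fusion. The names `Device`, `roofline_time`, and `is_memory_bound` are made up for this example.

```python
from dataclasses import dataclass


@dataclass
class Device:
    # Hypothetical round numbers for illustration, not the specs of any real GPU.
    peak_flops: float     # FLOP/s the compute units can sustain
    mem_bandwidth: float  # bytes/s through the "straw" to main memory


def compute_time(flops: float, dev: Device) -> float:
    return flops / dev.peak_flops


def memory_time(bytes_moved: float, dev: Device) -> float:
    return bytes_moved / dev.mem_bandwidth


def roofline_time(flops: float, bytes_moved: float, dev: Device) -> float:
    # Idealized lower bound: the op can't finish before its math is done
    # or before its data has crossed the memory bus, whichever takes longer.
    return max(compute_time(flops, dev), memory_time(bytes_moved, dev))


def is_memory_bound(flops: float, bytes_moved: float, dev: Device) -> bool:
    return memory_time(bytes_moved, dev) > compute_time(flops, dev)


BYTES = 2  # assumed fp16 storage
dev = Device(peak_flops=100e12, mem_bandwidth=2e12)

# Elementwise ReLU over 100M activations: ~1 FLOP each, read once + written once.
n = 100_000_000
relu_flops = n
relu_bytes = 2 * n * BYTES

# 4096 x 4096 x 4096 matmul: 2*M*N*K FLOPs, each matrix touched once (ideal reuse).
m = n_cols = k = 4096
mm_flops = 2 * m * n_cols * k
mm_bytes = (m * k + k * n_cols + m * n_cols) * BYTES

for name, f, b in [("relu", relu_flops, relu_bytes), ("matmul", mm_flops, mm_bytes)]:
    t = roofline_time(f, b, dev)
    bound = "memory" if is_memory_bound(f, b, dev) else "compute"
    print(f"{name}: {f:.2e} FLOPs, ~{t * 1e6:.0f} us, {bound}-bound")
# relu: 1.00e+08 FLOPs, ~200 us, memory-bound
# matmul: 1.37e+11 FLOPs, ~1374 us, compute-bound
```

Under these assumptions the ReLU does roughly 1,400x fewer FLOPs than the matmul yet is only about 7x faster, because its runtime is set entirely by bytes moved, i.e. by how many activations it reads and writes.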