Calm down folks, ChatGPT isn't actually an artificial intelligence

True AI might be possible someday, but ChatGPT ain't it

Alan Turing, one of the foundational figures in computer science, was obsessed with artificial intelligence towards the end of his tragically short life. So much so, in fact, that he came up with an informal test for when a computer can be said to be truly intelligent like a human, which he called the imitation game and which we now call the Turing test.

The test is pretty simple. Have someone communicate with a computer and if they cannot tell that they are talking to a computer — that is, it is indistinguishable from a human to another human — then the computer would have risen to the level of human intelligence.

Well, ChatGPT sure seems to pass that test with flying colors. In fact, it's not just passing Turing's test; it's passing med school exams and law school exams, and it's doing the homework of pretty much every kid in the United States, at the very least.

And if you don't know how ChatGPT works, it can seem overwhelming. So much so that people are starting to project onto ChatGPT, and generative AI in general, human qualities that it doesn't actually have.

Normally, this wouldn't be too big a deal; people misunderstand all kinds of things all the time. But because ChatGPT-like AIs are only going to become more widespread in the coming months, people are going to credit them with powers they don't actually have, and if they're misused under that assumption, these AIs can be far more harmful than helpful.

What is an adversarial generative AI?

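The AIs that are producing imagery, text, music, and more come in a few different designs. ChatGPT is a large language model built on a neural network architecture called a transformer, and Stable Diffusion is a diffusion model, but one of the foundational technologies behind generative AI is what's known as a Generative Adversarial Network (GAN), and all of these systems learn to produce new output by training on enormous amounts of existing human-made data. I won't get too in the weeds here, but essentially a GAN is two software systems working together. One, the generator, produces an output, and the other, the classifier, determines whether that output is valid or not.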

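In a GAN, the generator and classifier essentially fight it out during training: the generator produces an output, the classifier judges it against real examples, and both adjust based on the result, round after round, until the classifier can no longer reliably tell the generator's output from the real thing. ChatGPT doesn't use that adversarial setup, but it also builds its output bit by bit, writing its answers one word (technically, one "token") at a time and each time picking a likely next word given everything that came before. Either way, the result is output that very closely replicates what a human can do, creatively.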
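
To make the generator-versus-classifier tug-of-war concrete, here is a deliberately tiny toy in Python. It is a sketch under heavy simplifying assumptions, not a real GAN: the "training data" is three made-up numbers, the generator learns a single offset, and the classifier is just a linear score (a real GAN's classifier is a neural network that outputs a real-versus-fake probability). The names REAL_DATA, NOISE, and train are hypothetical, and the decay term is an assumption added so the two players settle down instead of circling each other forever.

```python
# Toy adversarial game (NOT a real GAN): the real "data" and the generator's
# outputs are plain numbers, and each player has a single parameter.
REAL_DATA = [3.5, 4.0, 4.5]  # hypothetical training examples, mean 4.0
NOISE = [-1.0, 0.0, 1.0]     # hypothetical fixed noise inputs for the generator


def mean(xs):
    return sum(xs) / len(xs)


def train(steps=200, lr=0.1, decay=1.0):
    b = 0.0  # generator: turns noise z into z + b, so b sets where outputs land
    w = 0.0  # classifier: scores an input x as w * x (higher means "looks real")
    for _ in range(steps):
        fake = [z + b for z in NOISE]
        # Classifier step: raise scores on real data, lower them on fakes.
        # The (assumed) decay term stops w from growing without bound.
        w += lr * (mean(REAL_DATA) - mean(fake) - decay * w)
        # Generator step: nudge outputs toward whatever the classifier
        # currently scores as more "real" (d(w * (z + b)) / db = w).
        # Note the generator never reads REAL_DATA directly.
        b += lr * w
    return b, w


if __name__ == "__main__":
    b, w = train()
    print(f"generator offset b = {b:.3f}, classifier weight w = {w:.3f}")
```

Running it, the generator's offset ends up very close to 4.0, the average of the "real" data, even though the generator never looks at that data itself; everything it learns arrives through the classifier's feedback.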

The generator relies on an obscene amount of input data that it "trains" on to produce its outputs, and the classifier relies on its own inputs to determine if what the generator produced makes sense. This may or may not be how human intelligence "creates" new things — neurologists are still trying to figure that out — but in a lot of ways, you can tell the tree by the fruits it bears, so it must be achieving human-level intelligence. Right? Well...

ChatGPT passes the Turing test, but does that matter?

When Turing came up with his test for artificial intelligence, he meant having a conversation with a rational actor such that you couldn't tell you were talking to a machine. Implicit in this is the idea that the machine was understanding what you were saying. Not recognizing keywords and generating a probabilistic response to a long set of keywords, but understanding.

To Turing, conversing with a machine to the point where it was indistinguishable from a human reflected intelligence, because building a vast repository of words, with various weights given to each depending on the words that precede it in a sentence, just wasn't remotely practical at the time.
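
The idea of a repository of words weighted by the words that precede them can be sketched as a toy bigram model in Python: it counts which word follows which in a piece of text and turns those counts into probabilities. This is an illustration under stated assumptions, not how ChatGPT is implemented; ChatGPT learns its weights with a neural network and looks at far more than the single preceding word. The sample sentence and the function names build_weights, next_word_probs, and generate are hypothetical.

```python
from collections import Counter, defaultdict


def build_weights(text):
    """Count how often each word follows each other word (a bigram table)."""
    words = text.lower().split()
    table = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        table[prev][nxt] += 1
    return table


def next_word_probs(table, prev):
    """Turn the counts of words seen after `prev` into probabilities summing to 1."""
    counts = table.get(prev.lower())
    if not counts:
        return {}
    total = sum(counts.values())
    return {word: count / total for word, count in counts.items()}


def generate(table, start, length):
    """Greedily append the most likely next word, one word at a time."""
    words = [start.lower()]
    for _ in range(length):
        probs = next_word_probs(table, words[-1])
        if not probs:
            break  # no known continuation for this word
        # max() keeps the first word it saw when probabilities tie.
        words.append(max(probs, key=probs.get))
    return " ".join(words)


if __name__ == "__main__":
    table = build_weights("the cat sat on the mat the cat ate the fish")
    print(next_word_probs(table, "the"))  # "cat" is twice as likely as "mat" or "fish"
    print(generate(table, "the", 3))
```

Here, generate always takes the single most likely next word; chatbots typically sample from the probabilities instead, which is one reason the same prompt can produce different answers.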

The kind of math required for that kind of calculation, on machines that took an entire day to perform calculations the cheapest smartphone can do in nanoseconds, would have looked as intractable as counting the number of atoms in the universe. It wouldn't have even factored into the thinking at the time.

Unfortunately, Turing didn't live long enough to see the rise of data science and chatbots, or even the integrated circuit that powers modern computers. Even before ChatGPT, chatbots were well on their way to passing the Turing test as commonly understood, as anyone using a chatbot to talk to their bank can tell you. But Turing would not have seen a chatbot as an artificial mind equal to that of a human.

A chatbot is a single-purpose tool, not an intelligence. Human-level intelligence requires the capacity to go beyond the limits its developers set for it, of its own volition. ChatGPT is preternaturally adept at mimicking human language patterns, but so is a parrot, and no one would argue that a parrot understands the meaning behind the words it is parroting.

ChatGPT passing the Turing test doesn't mean that ChatGPT is as intelligent as a human. It clearly isn't. All this means is that the Turing test is not the valid test of artificial intelligence we thought it would be.

ChatGPT can't teach itself anything, it can only learn what humans direct it to learn

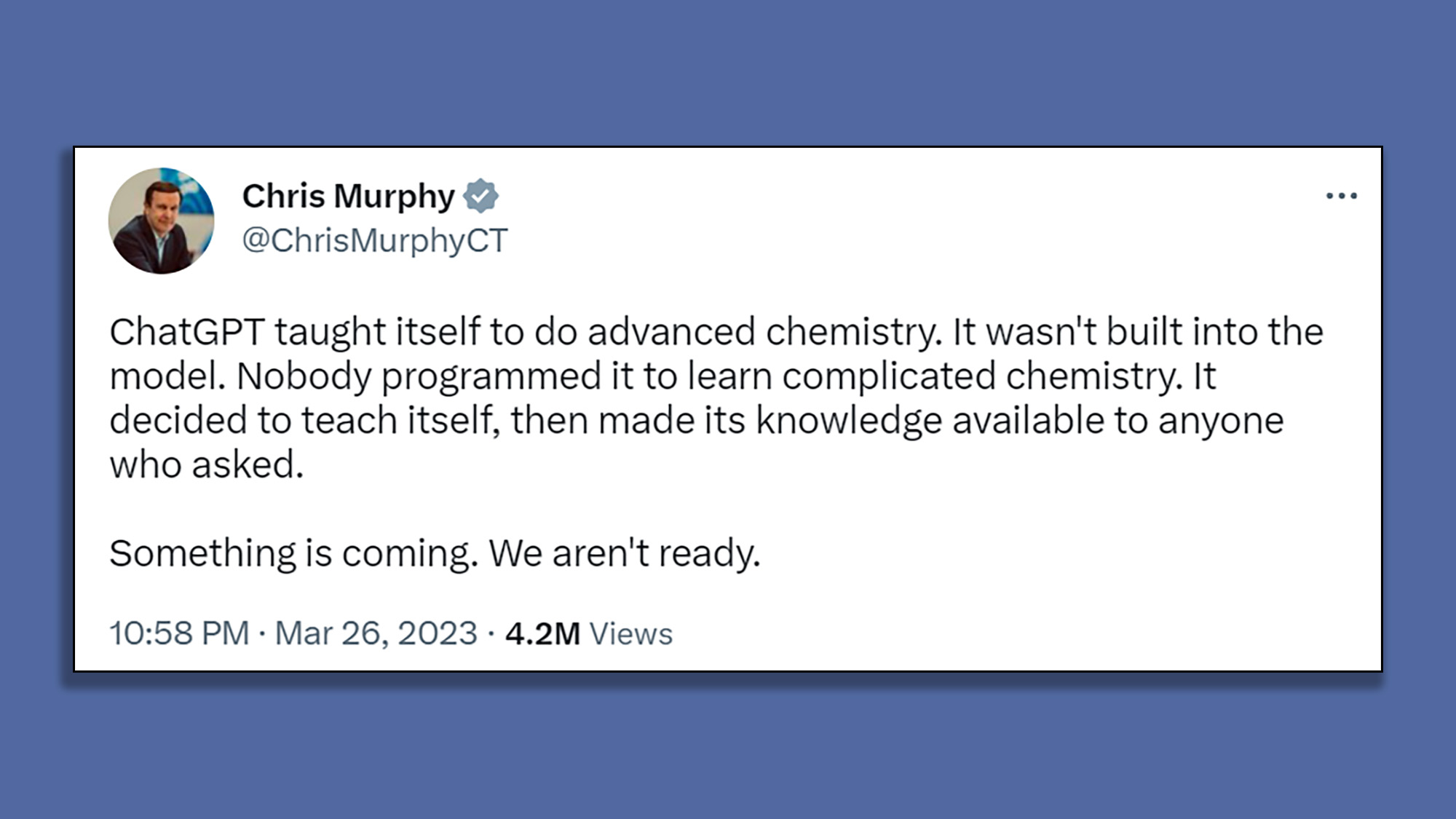

This week, US Senator from Connecticut Chris Murphy said on Twitter that ChatGPT taught itself chemistry of its own accord without prompting from its creators. Unless Sen. Murphy knows something that hasn't been made public, ChatGPT as we know it can do no such thing.

To be clear, if ChatGPT or any other generative AI sought out and learned something on its own initiative, then we would truly have crossed over into the post-human world of Artificial General Intelligence, since demonstrating independent initiative would unquestionably be a marker of true human-level intelligence.

But if a developer tells a bot to go out and learn something new and the bot does as it's asked, that doesn't make the bot intelligent.

Doing as you're told and doing something of your own will may look alike to an outside observer, but they are two very different things. Humans intuitively understand this, but ChatGPT can only explain this difference to us if it has been fed philosophy texts that discuss the issue of free will. If it's never been fed Plato, Aristotle, or Nietzsche, it's not going to come up with the idea of free will on its own. It won't even know that such a thing exists if it isn't told that it does.

There are reasons to worry about ChatGPT, but it becoming 'intelligent' isn't one of them

I've gone on for a while about the dangers of ChatGPT, mostly that it cannot understand what it's saying and so it can produce defamatory, plagiarized, and downright incorrect information as fact.

There is a broader issue of ChatGPT being seen as a replacement for human workers, since it sure looks like ChatGPT (and Stable Diffusion, and other generative AIs) can do what humans can do more quickly, more easily, and much more cheaply than human labor. That's a discussion for another time, but the social consequences of ChatGPT are probably the biggest danger here, not the idea that we're somehow building SkyNet.

ChatGPT, at its core, is a system of connected digital nodes, each with numerical weights assigned to it, that produces a statistically likely output for a given input. That isn't thought; it's literally just math that humans have built into a machine. It might look powerful, but the human minds that made it are the real intelligence here, and the two shouldn't be confused.
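
The "connected nodes with weights" idea can be illustrated with a toy neural network in Python. It's a sketch with hand-picked, hypothetical weights: two inputs feed two hidden nodes, which feed one output node, and each node simply multiplies its inputs by weights, adds them up, and (for the hidden nodes) clips negative results to zero. These particular weights happen to compute exclusive-or (XOR), but nothing in the code "knows" that; it is multiplication and addition all the way down. A model like ChatGPT has vastly more nodes and weights, learned from data rather than chosen by hand.

```python
def relu(x):
    """Clip negative values to zero (a common node activation)."""
    return max(0.0, x)


# Hypothetical hand-picked weights: (one weight per input, bias) for each node.
HIDDEN_NODES = [([1.0, 1.0], 0.0), ([1.0, 1.0], -1.0)]
OUTPUT_NODE = ([1.0, -2.0], 0.0)


def node(inputs, weights, bias):
    """A node is just a weighted sum of its inputs plus a bias."""
    return sum(x * w for x, w in zip(inputs, weights)) + bias


def forward(inputs):
    """Pass the inputs through the hidden layer, then through the output node."""
    hidden = [relu(node(inputs, w, b)) for w, b in HIDDEN_NODES]
    weights, bias = OUTPUT_NODE
    return node(hidden, weights, bias)


if __name__ == "__main__":
    for a, b in [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0), (1.0, 1.0)]:
        print(a, b, "->", forward([a, b]))
```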

John (He/Him) is the Components Editor here at TechRadar and he is also a programmer, gamer, activist, and Brooklyn College alum currently living in Brooklyn, NY.

Named by the CTA as a CES 2020 Media Trailblazer for his science and technology reporting, John specializes in all areas of computer science, including industry news, hardware reviews, PC gaming, as well as general science writing and the social impact of the tech industry.

You can find him online on Bluesky @johnloeffler.bsky.social